iMAP

Implicit Mapping and Positioning in Real-Time

ICCV 2021

We show for the first time that a multilayer perceptron (MLP) can serve as the only scene representation in a real-time SLAM system for a handheld RGB-D camera. Our network is trained in live operation without prior data, building a dense, scene-specific implicit 3D model of occupancy and colour which is also immediately used for tracking.

Achieving real-time SLAM via continual training of a neural network against a live image stream requires significant innovation. Our iMAP algorithm uses a keyframe structure and a multi-processing computation flow, with dynamic information-guided pixel sampling for speed; tracking runs at 10 Hz and global map updating at 2 Hz. The advantages of an implicit MLP over standard dense SLAM techniques include efficient geometry representation with automatic detail control, and smooth, plausible filling-in of unobserved regions such as the back surfaces of objects.
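The split between a fast tracking loop and a slower mapping loop can be sketched as two concurrent workers that share a frozen copy of the map. This is a minimal illustration under stated assumptions, not iMAP's implementation: it uses Python threads instead of separate processes, a plain dict stands in for the network weights, the optimisation steps are placeholders, and the names `MapSnapshot`, `mapping_loop` and `tracking_loop` are hypothetical. Only the hand-off of weights between the two loops is modelled.

```python
import copy
import threading
import time


class MapSnapshot:
    """Hypothetical holder for the most recent frozen copy of the map network."""

    def __init__(self, weights):
        self._lock = threading.Lock()
        self._weights = copy.deepcopy(weights)
        self._version = 0

    def publish(self, weights):
        frozen = copy.deepcopy(weights)  # copy first so the lock is held only briefly
        with self._lock:
            self._weights = frozen
            self._version += 1

    def latest(self):
        with self._lock:
            return self._version, self._weights


def mapping_loop(snapshot, weights, n_updates, period_s):
    for _ in range(n_updates):
        weights["step"] += 1  # placeholder for joint network + keyframe-pose optimisation
        snapshot.publish(weights)
        time.sleep(period_s)


def tracking_loop(snapshot, n_frames, period_s, versions_seen):
    for _ in range(n_frames):
        version, frozen = snapshot.latest()
        # Placeholder: optimise the current camera pose against `frozen`, weights held fixed.
        versions_seen.append(version)
        time.sleep(period_s)


# Periods keep the 10 Hz : 2 Hz ratio from the text, scaled 10x faster for a quick demo.
weights = {"step": 0}
snapshot = MapSnapshot(weights)
versions_seen = []
mapper = threading.Thread(target=mapping_loop, args=(snapshot, weights, 4, 0.05))
tracker = threading.Thread(target=tracking_loop, args=(snapshot, 20, 0.01, versions_seen))
mapper.start()
tracker.start()
mapper.join()
tracker.join()
print(versions_seen)
```

Copying the weights before taking the lock keeps the critical section short, so the tracker never waits for a full copy. In CPython, CPU-bound optimisation in threads would be serialised by the global interpreter lock, so genuinely parallel tracking and mapping would need separate processes or GPU work, in line with the multi-processing flow described above.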

Method

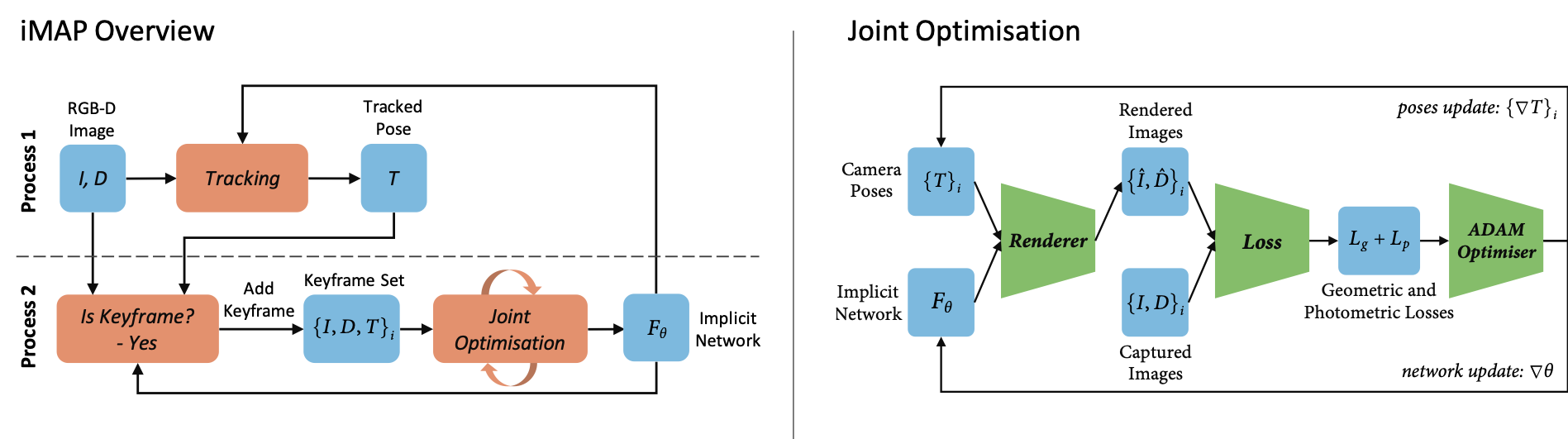

A 3D volumetric map is represented using a fully-connected neural network that maps a 3D coordinate to colour and volume density. We map a scene from depth and colour video by incrementally optimising the network weights and camera poses with respect to a sparse set of actively sampled measurements. Two processes run concurrently: tracking, which optimises the pose of the current frame with respect to the locked network; and mapping, which jointly optimises the network and the camera poses of selected keyframes.
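Rendering a pixel means querying the network at sample points along the camera ray and compositing the results into an expected depth and colour, which can then be compared with the measured depth and colour to form the render loss. The sketch below assumes NeRF-style compositing, where each sample's occupancy is o_i = 1 - exp(-sigma_i * delta_i) for density sigma_i and spacing delta_i to the next sample. The analytic `toy_field` is a hypothetical stand-in for the MLP so that the example needs no deep-learning library; `render_ray` is likewise an illustrative name.

```python
import math


def toy_field(point):
    """Hypothetical stand-in for the MLP: an opaque red wall filling z >= 2."""
    _, _, z = point
    density = 50.0 if z >= 2.0 else 0.0
    return (1.0, 0.0, 0.0), density


def render_ray(field, origin, direction, depths):
    """Composite field samples along one ray into expected depth and colour."""
    transmittance = 1.0  # probability the ray has not terminated before this sample
    depth, colour, weights = 0.0, [0.0, 0.0, 0.0], []
    for i, d in enumerate(depths):
        point = tuple(o + d * k for o, k in zip(origin, direction))
        rgb, density = field(point)
        # Spacing to the next sample; the last one gets a huge spacing so it
        # absorbs whatever probability mass remains (an assumed convention).
        delta = depths[i + 1] - d if i + 1 < len(depths) else 1e10
        occupancy = 1.0 - math.exp(-density * delta)  # termination probability in this interval
        w = transmittance * occupancy
        weights.append(w)
        depth += w * d
        colour = [c + w * ch for c, ch in zip(colour, rgb)]
        transmittance *= 1.0 - occupancy
    return depth, colour, weights


# Ray from the origin along +z, sampled every 5 cm from 0.5 m to 3.45 m.
depths = [0.5 + 0.05 * i for i in range(60)]
depth, colour, weights = render_ray(toy_field, (0.0, 0.0, 0.0), (0.0, 0.0, 1.0), depths)
print(round(depth, 3), [round(c, 3) for c in colour])
```

Because the wall's density is high, almost all of the termination probability falls in the first interval after 2 m, so the rendered depth lands within a few millimetres of the wall and the colour is essentially pure red.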

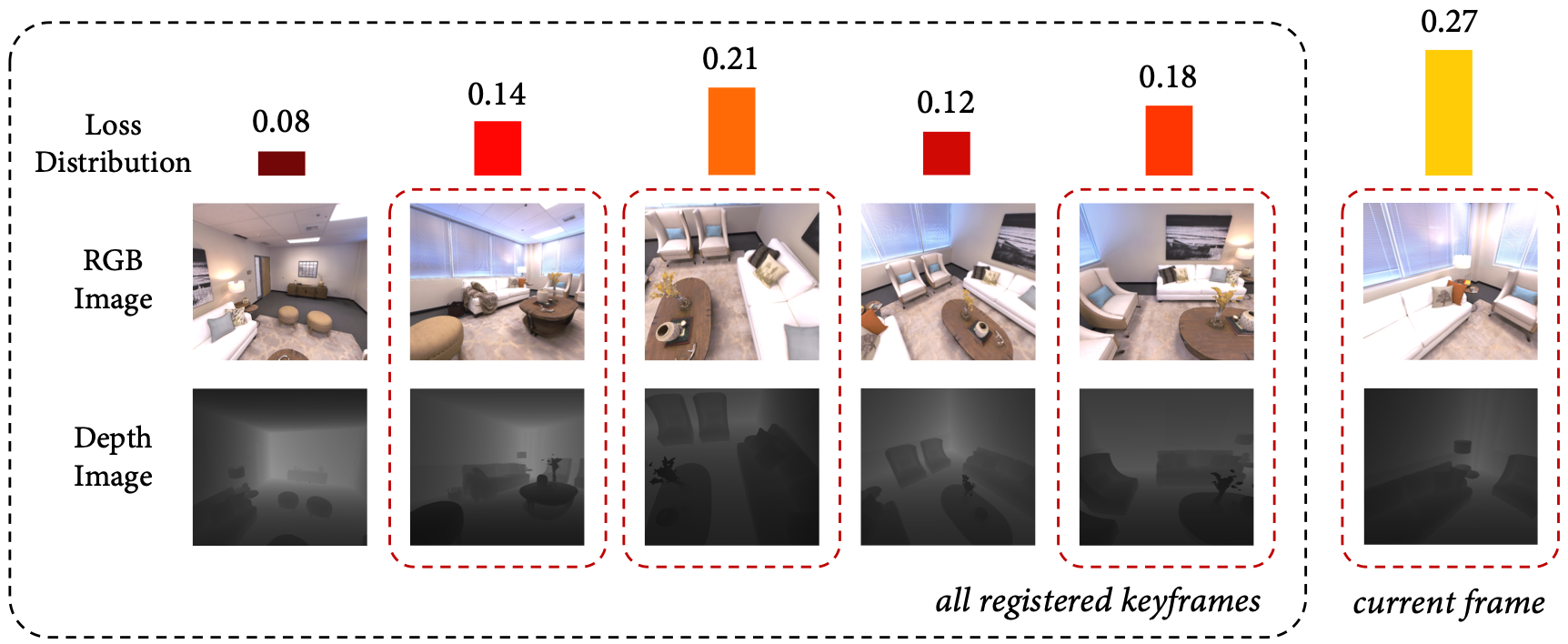

A set of keyframes selected based on information gain serves as a memory bank to avoid network forgetting. Every time a new keyframe is added, we lock a copy of our network to represent a snapshot of our 3D map at that point in time. Subsequent frames are checked against this copy and are selected if they see a significantly new region. We allocate more pixel samples to keyframes with a higher loss, because they relate to regions that are newly explored, highly detailed, or that the network has started to forget. To bound joint optimisation computation, we choose a fixed number of keyframes at each iteration, randomly sampled according to the loss distribution.
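Both keyframe decisions in this paragraph can be sketched in a few lines. `is_new_keyframe` compares depth rendered from the locked snapshot (passed in here as a plain list) with the observed depth, and flags the frame as new when too few pixels are already well explained; the 10% relative depth tolerance and the 65% fraction are illustrative assumptions, not values stated on this page. `sample_keyframes` picks a fixed number of distinct keyframes with probability weighted by loss, using the Efraimidis-Spirakis key trick for weighted sampling without replacement; this sampler is our choice for the sketch, not necessarily the one iMAP uses, and both function names are hypothetical.

```python
import random


def is_new_keyframe(rendered_depth, observed_depth, depth_tol=0.1, min_fraction=0.65):
    """Return True if the frame sees a significantly new region.

    rendered_depth comes from the frozen network copy, observed_depth from the
    sensor, both at the same sampled pixels. Thresholds are assumptions.
    """
    valid = [(r, o) for r, o in zip(rendered_depth, observed_depth) if o > 0]  # skip missing depth
    if not valid:
        return False
    explained = sum(1 for r, o in valid if abs(r - o) / o < depth_tol)
    return explained / len(valid) < min_fraction


def sample_keyframes(losses, k, rng=random):
    """Pick k distinct keyframe indices, favouring keyframes with higher loss.

    Weighted sampling without replacement: draw u ~ U(0, 1) per item, use
    u ** (1 / weight) as its key and keep the k largest keys.
    """
    keyed = []
    for idx, loss in enumerate(losses):
        weight = max(loss, 1e-12)  # zero-loss keyframes are picked only if k exceeds the rest
        keyed.append((rng.random() ** (1.0 / weight), idx))
    keyed.sort(reverse=True)
    return [idx for _, idx in keyed[:k]]


# A frame whose observed depth is twice the rendered depth everywhere is "new".
print(is_new_keyframe([1.0] * 10, [2.0] * 10))  # True
print(is_new_keyframe([1.0] * 10, [1.02] * 10))  # False
print(sample_keyframes([0.1, 5.0, 0.2, 4.0], k=2, rng=random.Random(0)))
```

Sampling in proportion to loss spends the fixed per-iteration budget where the map is weakest, while every keyframe with non-zero loss keeps some chance of being revisited, which is what guards against forgetting.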

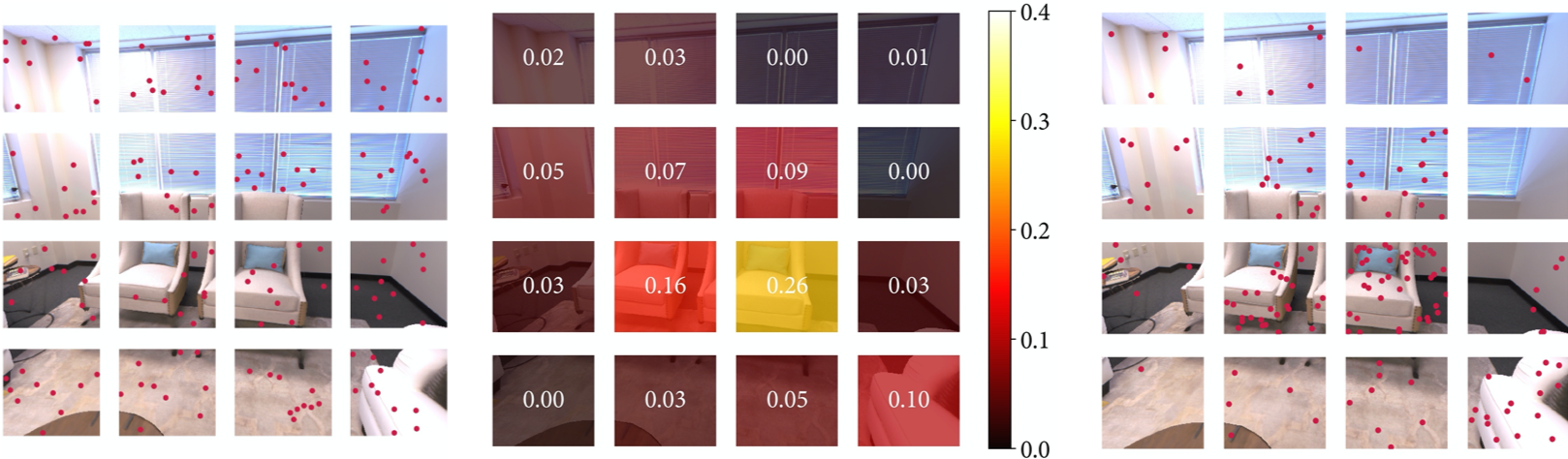

Rendering and optimising all image pixels would be expensive in computation and memory. We take advantage of image regularity to render and optimise only a very sparse set of random pixels (200 per image) at each iteration. We use the render loss to guide active sampling towards informative areas with higher detail or where the reconstruction is not yet precise. A loss distribution is calculated across an image grid using the render loss from a set of uniform samples, and active samples are then allocated in proportion to this distribution.
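The two-stage sampling can be sketched as follows: uniform samples measure the render loss, the mean loss per grid cell becomes a distribution, and the active budget is split across cells in proportion to it, with largest-remainder rounding so the total is exact. The 8x8 grid, the sample counts and the `loss_fn` callback (standing in for rendering a pixel and comparing it with the measurement) are assumptions for illustration, as are the helper names; the sketch also assumes the grid divides the image size evenly.

```python
import random


def allocate_proportional(weights, budget):
    """Split an integer budget in proportion to weights (largest-remainder rounding)."""
    total = sum(weights)
    if total <= 0:
        weights, total = [1.0] * len(weights), float(len(weights))  # no signal: spread evenly
    shares = [budget * w / total for w in weights]
    counts = [int(s) for s in shares]
    by_remainder = sorted(range(len(shares)), key=lambda i: shares[i] - counts[i], reverse=True)
    for i in by_remainder[: budget - sum(counts)]:
        counts[i] += 1
    return counts


def active_sample(loss_fn, width, height, grid=8, n_uniform=200, n_active=200, rng=random):
    """Return (uniform, active) pixel lists; active pixels favour high-loss cells."""
    assert width % grid == 0 and height % grid == 0, "sketch assumes the grid divides the image"
    cell_w, cell_h = width // grid, height // grid

    # Stage 1: uniform samples, with their render loss accumulated per cell.
    uniform = [(rng.randrange(width), rng.randrange(height)) for _ in range(n_uniform)]
    loss_sum = [0.0] * (grid * grid)
    count = [0] * (grid * grid)
    for u, v in uniform:
        cell = (v // cell_h) * grid + (u // cell_w)
        loss_sum[cell] += loss_fn(u, v)
        count[cell] += 1
    mean_loss = [s / c if c else 0.0 for s, c in zip(loss_sum, count)]

    # Stage 2: allocate active samples in proportion to the per-cell loss distribution.
    active = []
    for cell, n in enumerate(allocate_proportional(mean_loss, n_active)):
        x0, y0 = (cell % grid) * cell_w, (cell // grid) * cell_h
        active += [(x0 + rng.randrange(cell_w), y0 + rng.randrange(cell_h)) for _ in range(n)]
    return uniform, active


# Toy loss that is high only on the left half of a 64x48 image.
uniform, active = active_sample(lambda u, v: 1.0 if u < 32 else 0.0, 64, 48,
                                n_active=100, rng=random.Random(0))
print(len(uniform), len(active), max(u for u, _ in active))
```

For scale: if frames are 640x480 (an assumption here), 200 pixels is about 0.07% of the 307,200 pixels in each image.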

Bibtex

@inproceedings{Sucar:etal:ICCV2021,
  title={{iMAP}: Implicit Mapping and Positioning in Real-Time},
  author={Edgar Sucar and Shikun Liu and Joseph Ortiz and Andrew Davison},
  booktitle={Proceedings of the International Conference on Computer Vision ({ICCV})},
  year={2021},
}

Contact

If you have any questions, please feel free to contact Edgar Sucar.